Design Security for Data Policies and Standards – Keeping Data Safe and Secure

When you begin thinking about security, many of the requirements will be driven by the kind of data you have. Does the data you store contain personally identifiable information (PII), such as names, email addresses, or physical addresses, or does it consist of historical theta brain wave readings? In both scenarios you would want some form of protection from bad actors who could destroy or steal the data. The amount of protection you need should be identified by a data security standard. The popular Payment Card Industry (PCI) standard defines the kinds of security mechanisms required of companies that transmit, process, and store payment information. There are numerous PCI standards, and they can serve as a baseline for defining your own data security standards based on the type of data your data analytics solution ingests, transforms, and exposes. For example, a standard might identify the minimum version of TLS that consumers must use when consuming your data. Other examples of data security standards are that all data columns must be associated with a sensitivity level, or that all data containing PII must be purged after 120 days.
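The example standards in the previous paragraph are concrete enough to be checked automatically. The following Python sketch shows one possible way to do that. The sensitivity level names, the `Column` and `Record` types, and the helper functions are hypothetical; only the 120‐day PII retention period and the idea of a minimum TLS version come from the text, and TLS 1.2 is assumed here as that minimum.

```python
import ssl
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

# Hypothetical classification scheme; substitute your organization's own levels.
SENSITIVITY_LEVELS = {"public", "internal", "confidential", "restricted"}
# From the example standard: data containing PII must be purged after 120 days.
PII_RETENTION = timedelta(days=120)


@dataclass
class Column:
    name: str
    sensitivity: Optional[str]  # None means no level has been assigned yet


@dataclass
class Record:
    record_id: int
    contains_pii: bool
    ingested_on: date


def unlabeled_columns(columns: list[Column]) -> list[str]:
    """Standard: every column must be associated with a known sensitivity level."""
    return [c.name for c in columns if c.sensitivity not in SENSITIVITY_LEVELS]


def records_to_purge(records: list[Record], today: date) -> list[int]:
    """Standard: records containing PII are purged once older than 120 days."""
    return [
        r.record_id
        for r in records
        if r.contains_pii and today - r.ingested_on > PII_RETENTION
    ]


def client_tls_context() -> ssl.SSLContext:
    """Standard: consumers must connect with at least TLS 1.2 (assumed minimum)."""
    context = ssl.create_default_context()
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context
```

Running checks like these on a schedule turns the written standard into something that can be audited rather than merely documented.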

A data security policy contains the data security standards component along with numerous other sections that pertain to security. A data security policy can be used to identify security roles and responsibilities throughout your company so that it is clear who is responsible for what. For example, which aspects of data security is a certified Data Engineer Associate responsible for? At what point does data security become the responsibility of a Security Engineer? A data security policy also defines the procedures to follow when security violations or incidents occur. Data classification, data access management, data encryption, and data management and disposal are all components of a data security policy. Some basic security principles, such as denying access to customer data by default and always granting the lowest level of privileges required to complete a task, are good policies to abide by. Most of these security aspects are covered in more detail later in this chapter. After completing this chapter, you will be able to contribute to the creation of a data security policy and strategy.
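The principles of denying access by default and granting the lowest level of privilege can be illustrated with a small access check. This is a hypothetical in‐memory model, not an Azure API; the `AccessPolicy` class and the principal and dataset names are illustrative only.

```python
from dataclasses import dataclass, field


@dataclass
class AccessPolicy:
    # Explicit grants only: (principal, dataset) -> set of permitted actions.
    # Anything not listed here is denied.
    grants: dict = field(default_factory=dict)

    def grant(self, principal: str, dataset: str, action: str) -> None:
        """Grant one specific action, rather than broad access, to one principal."""
        self.grants.setdefault((principal, dataset), set()).add(action)

    def is_allowed(self, principal: str, dataset: str, action: str) -> bool:
        # No matching grant means no access: deny by default.
        return action in self.grants.get((principal, dataset), set())


policy = AccessPolicy()
# Least privilege: the analyst only needs to read customer data, so only "read" is granted.
policy.grant("analyst", "customers", "read")
```

With this design, forgetting to configure a principal fails safe: the principal simply has no access until someone grants it explicitly.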

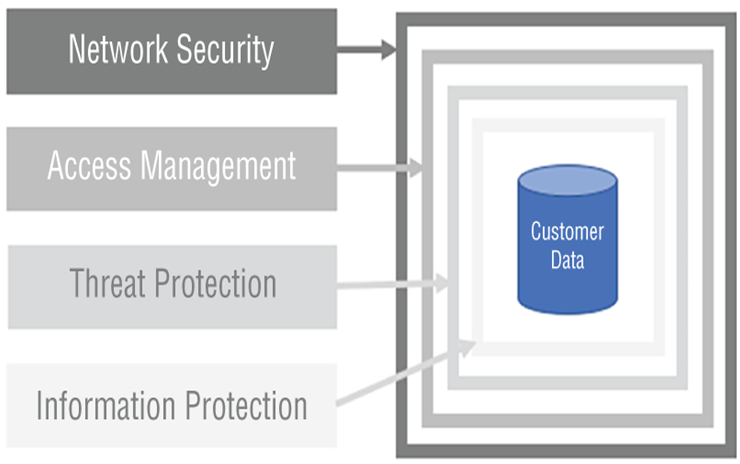

As you begin to describe and design your data security model, consider approaching it from a layered perspective. Figure 8.1 illustrates a layered security model. The first layer focuses on network security. In this chapter you will learn about virtual networks (VNets), network security groups (NSGs), firewalls, and private endpoints, each of which provides security at the networking layer.

FIGURE 8.1 Layered security
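Network security groups are one of the networking‐layer controls mentioned above. An NSG evaluates its rules in priority order (a lower number means a higher priority), stops at the first rule that matches, and ends with a default rule that denies all remaining inbound traffic. The following Python sketch simulates that evaluation in simplified form: it matches only on source address prefix and destination port, and it omits the other default rules Azure creates, such as AllowVnetInBound. The rule names and addresses are hypothetical.

```python
from dataclasses import dataclass
from ipaddress import ip_address, ip_network
from typing import Optional


@dataclass(frozen=True)
class Rule:
    name: str
    priority: int        # lower number = evaluated first
    source: str          # source address prefix in CIDR notation
    port: Optional[int]  # destination port; None matches any port
    allow: bool


# Simplified stand-in for the default inbound rule that denies everything else.
DENY_ALL_INBOUND = Rule("DenyAllInBound", 65500, "0.0.0.0/0", None, False)


def evaluate(rules: list[Rule], source_ip: str, port: int) -> tuple[str, bool]:
    """Return (rule name, allowed?) for the first matching rule, by priority."""
    for rule in sorted([*rules, DENY_ALL_INBOUND], key=lambda r: r.priority):
        if ip_address(source_ip) in ip_network(rule.source) and rule.port in (None, port):
            return rule.name, rule.allow
    # Only reached for addresses outside 0.0.0.0/0 (for example IPv6) in this sketch.
    return DENY_ALL_INBOUND.name, False


rules = [
    Rule("AllowSqlFromAppSubnet", 100, "10.0.1.0/24", 1433, True),
    Rule("DenyOtherAppSubnetTraffic", 200, "10.0.1.0/24", None, False),
]
```

Because the first match wins, a narrow allow rule must have a lower priority number than any broader deny rule it is meant to override.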

The next layer is the access management layer. This layer deals with authentication and authorization: the former confirms that you are who you say you are, and the latter validates that you are allowed to access the resource. Common tools on Azure for verifying that a person is who they claim to be include Azure Active Directory (Azure AD), SQL authentication, and Windows Authentication (Kerberos), which is in preview at the time of writing. After authentication succeeds, access to resources is managed through role assignments. A common tool for this on Azure is role‐based access control (RBAC). Many additional products, features, and concepts apply within this area, such as managed identities, Azure Key Vault, service principals, access control lists (ACLs), single sign‐on (SSO), and the principle of least privilege. Each of these is described in more detail in the following sections.
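The difference between authentication and authorization, and the way a role assignment grants access at a scope, can be sketched as follows. This is a hypothetical in‐memory model, not the Azure AD or Azure RBAC APIs: the token table stands in for an identity provider, and the two roles use simplified action lists. The one behavior it deliberately mirrors is that an Azure role assignment combines a principal, a role, and a scope, and applies to everything beneath that scope.

```python
# Hypothetical stand-in for an identity provider such as Azure AD.
TOKENS = {"token-abc": "alice@contoso.com"}

# Simplified role definitions: role name -> allowed actions.
ROLE_ACTIONS = {"Reader": {"read"}, "Contributor": {"read", "write"}}

# Role assignments: (principal, role, scope).
ASSIGNMENTS = [
    ("alice@contoso.com", "Reader", "/subscriptions/sub1/resourceGroups/analytics"),
]


def authenticate(token: str):
    """Authentication: confirm who the caller is (None if the token is unknown)."""
    return TOKENS.get(token)


def in_scope(resource_id: str, scope: str) -> bool:
    """A resource is covered if it is the scope itself or sits beneath it."""
    # Compare case-insensitively and on whole path segments, so ".../analytics"
    # does not accidentally cover ".../analytics2".
    resource, scope = resource_id.lower().rstrip("/"), scope.lower().rstrip("/")
    return resource == scope or resource.startswith(scope + "/")


def authorize(principal: str, action: str, resource_id: str) -> bool:
    """Authorization: check the role assignments of the authenticated principal."""
    return any(
        assigned == principal and action in ROLE_ACTIONS[role] and in_scope(resource_id, scope)
        for assigned, role, scope in ASSIGNMENTS
    )


def access(token: str, action: str, resource_id: str) -> str:
    principal = authenticate(token)
    if principal is None:
        return "unauthenticated"
    if not authorize(principal, action, resource_id):
        return "forbidden"
    return "allowed"
```

Note that authorization is only attempted after authentication succeeds, matching the order described in the text.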

The kind of business a company performs dictates the kind of data it collects and stores. Companies that work with governments or financial institutions, for example, are more likely to be targeted by data theft attempts than companies that measure brain waves. The threat of a security breach is therefore greater for companies with high‐value data, which means they need to take stronger measures to prevent malicious behavior. To start with, performing vulnerability assessments and attack simulations helps identify known weaknesses in your security. In parallel, enabling threat detection, virus scanners, audit logging, and traceability reduces the likelihood of long‐term and serious outages caused by exploitation. Microsoft Defender for Cloud can be used as the hub for viewing and analyzing your security logs.
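Threat detection often builds on the audit logs described above. As an illustration only, the following sketch scans sign‐in events and flags principals with repeated failed attempts in a short window, a common sign of password guessing. The event format and the threshold of five failures in ten minutes are assumptions; this is not how Microsoft Defender for Cloud implements its detections.

```python
from collections import defaultdict, deque
from datetime import datetime, timedelta


def flag_repeated_failures(events, threshold=5, window=timedelta(minutes=10)):
    """Return principals with at least `threshold` failed sign-ins within `window`.

    events: iterable of (timestamp, principal, succeeded) tuples in time order.
    """
    recent_failures = defaultdict(deque)  # principal -> timestamps of recent failures
    flagged = set()
    for timestamp, principal, succeeded in events:
        if succeeded:
            continue
        failures = recent_failures[principal]
        failures.append(timestamp)
        # Drop failures that fall outside the sliding window.
        while timestamp - failures[0] > window:
            failures.popleft()
        if len(failures) >= threshold:
            flagged.add(principal)
    return flagged


start = datetime(2024, 1, 1, 12, 0)
events = sorted(
    [(start + timedelta(minutes=i), "mallory", False) for i in range(5)]
    + [(start + timedelta(minutes=20 * i), "alice", False) for i in range(5)]
)
```

A real detection would also consider source addresses, successful sign‐ins after a burst of failures, and alert routing, which this sketch leaves out.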

The last layer of security, information protection, is applied to the data itself. This layer includes concepts such as data encryption, which is typically applied both while the data is stored and not in use (encryption‐at‐rest) and while it is moving from one location to another (encryption‐in‐transit). Data masking, the labeling of sensitive information, and logging who accesses the data and how often are additional techniques for protecting your data at this layer.
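Dynamic data masking hides sensitive values at query time from users who lack permission to see them, without changing the stored data. The following Python sketch approximates two masking functions that Azure SQL Database offers, `email()` (which exposes the first letter and a constant `.com` suffix) and `partial(prefix, padding, suffix)`. The Python function names, the `can_unmask` flag, and the sample row are hypothetical; the real feature is configured on the database column, not in application code.

```python
def mask_email(value: str) -> str:
    """Approximates email(): keep the first letter, replace the rest with a fixed pattern."""
    return value[:1] + "XX@XXXX.com"


def mask_partial(value: str, prefix: int, padding: str, suffix: int) -> str:
    """Approximates partial(): show `prefix` leading and `suffix` trailing characters."""
    tail = value[-suffix:] if suffix else ""  # value[-0:] would return the whole string
    return value[:prefix] + padding + tail


def read_row(row: dict, masks: dict, can_unmask: bool) -> dict:
    """Apply masks at read time; the stored row itself is never modified."""
    if can_unmask:
        return dict(row)
    return {col: masks[col](val) if col in masks else val for col, val in row.items()}


row = {"name": "Ada", "email": "ada@contoso.com", "card": "4111111111111111"}
masks = {
    "email": mask_email,
    "card": lambda v: mask_partial(v, 0, "XXXX-XXXX-XXXX-", 4),
}
```

Here `read_row(row, masks, can_unmask=False)` returns the email as `aXX@XXXX.com` and the card as `XXXX-XXXX-XXXX-1111`, while a privileged reader sees the original values.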

Table 8.1 summarizes the security‐related capabilities of various Azure products.

TABLE 8.1 Azure data product security support

| Feature | Azure SQL Database | Azure Synapse Analytics | Azure Data Explorer | Azure Databricks | Azure Cosmos DB |
|---|---|---|---|---|---|
| Authentication | SQL / Azure AD | SQL / Azure AD | Azure AD | Tokens / Azure AD | DB users / Azure AD |
| Dynamic masking | Yes | Yes | Yes | Yes | Yes |
| Encryption‐at‐rest | Yes | Yes | Yes | Yes | Yes |
| Row‐level security | Yes | Yes | No | Yes | No |
| Firewall | Yes | Yes | Yes | Yes | Yes |

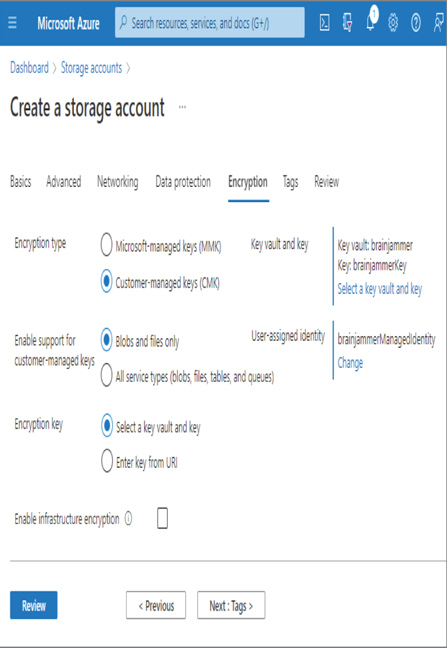

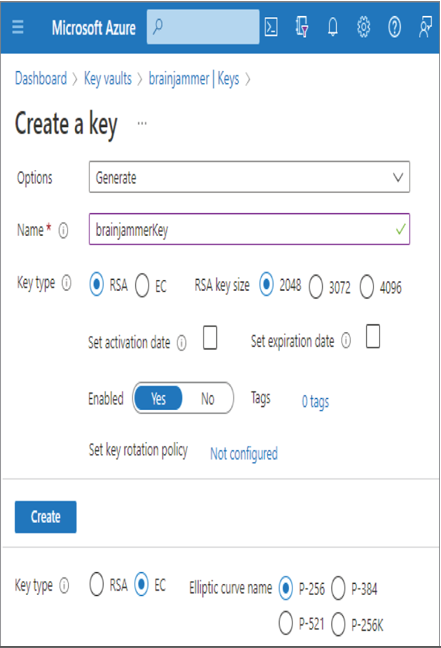

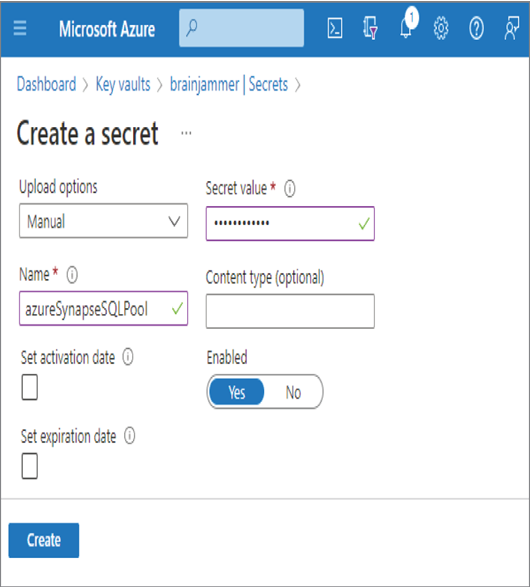

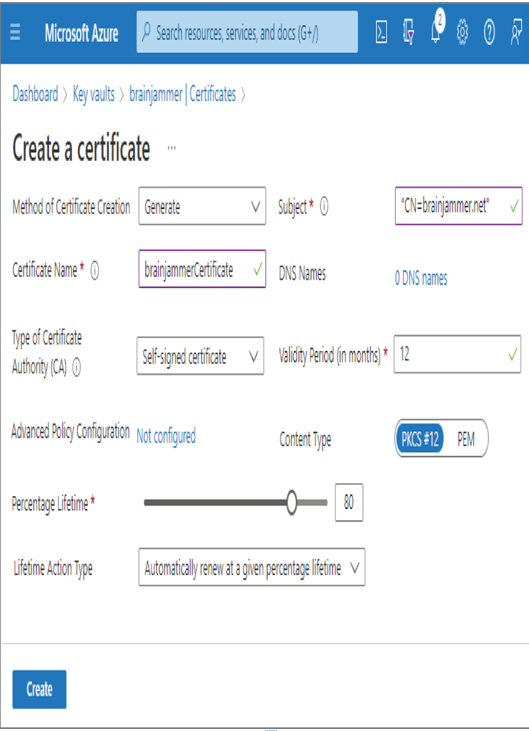

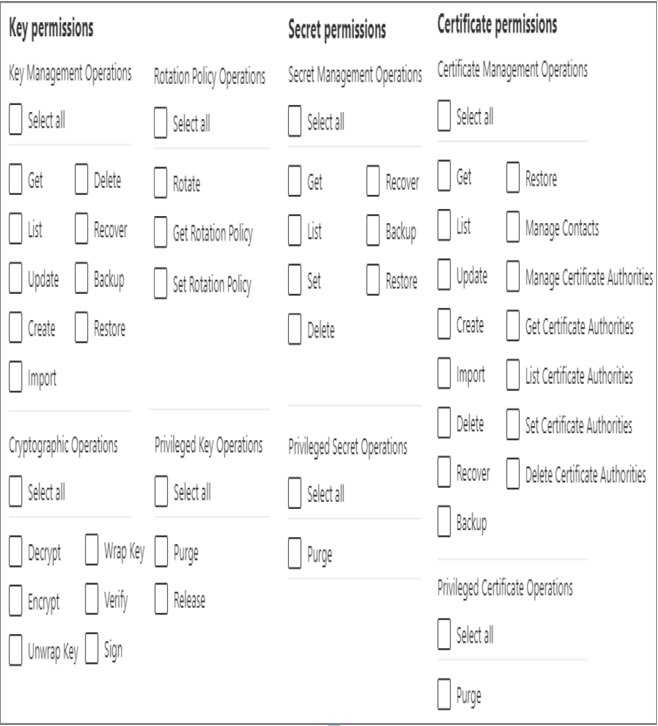

Azure data products enable you to configure each layer of the security model. The Azure platform provides many more features and capabilities to help monitor, manage, and maintain the security component of your data analytics solution, and the remainder of this chapter provides details about them.

Before you continue, complete Exercise 8.1, where you will provision an Azure Key Vault resource. Azure Key Vault is a solution that helps you securely store secrets, keys, and certificates. It comes in two tiers, Standard and Premium, and the primary difference between them is support for keys protected by a hardware security module (HSM). An HSM is a physical device dedicated to performing encryption, key management, authentication, and more. HSM protection is available only in the Premium tier; the Standard tier uses software‐based key protection. HSM provides the highest level of security and performance and is often required to meet compliance regulations. Because Azure Key Vault plays a significant role in security, learning some details about it before you continue will increase your comprehension and broaden your perspective.
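After you provision a vault, an application can read a secret from it instead of keeping the secret in code or configuration files. The following sketch uses the Azure SDK for Python packages `azure-identity` and `azure-keyvault-secrets`. The vault and secret names are hypothetical, the calling identity is assumed to already have permission to read secrets (for example, through the Key Vault Secrets User role), and the URI format shown applies to the Azure public cloud.

```python
def vault_url(vault_name: str) -> str:
    """Build the vault URI used by the SDK (Azure public cloud format)."""
    return f"https://{vault_name}.vault.azure.net/"


def get_secret_value(vault_name: str, secret_name: str) -> str:
    """Read a secret using whichever credential DefaultAzureCredential finds.

    Requires: pip install azure-identity azure-keyvault-secrets
    The imports live inside the function so vault_url() works without the SDK.
    """
    from azure.identity import DefaultAzureCredential
    from azure.keyvault.secrets import SecretClient

    client = SecretClient(vault_url=vault_url(vault_name), credential=DefaultAzureCredential())
    return client.get_secret(secret_name).value
```

A call such as `get_secret_value("contoso-kv", "sql-connection-string")` would return the stored value. `DefaultAzureCredential` tries several credential sources in turn, such as environment variables, a managed identity, or a signed‐in Azure CLI session, which lets the same code run on a developer workstation and in Azure without embedding credentials.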